New discoveries #15

Scale-MAE, labs-gpt-stac, ChaBuD challenge, BioMassters winners announced & Marine Debris dataset

Welcome to the 15th edition of the newsletter. I'm delighted to share that the newsletter now has 5k subscribers 🔥

Scale-MAE

In the paper Scale-MAE: A Scale-Aware Masked Autoencoder for Multiscale Geospatial Representation Learning, the authors present Scale-MAE, a pretraining method designed to explicitly learn relationships between remote sensing data at different spatial scales. This is particularly important for remote sensing applications, where images are acquired at various scales and often require multiscale analysis for accurate interpretation.

Scale-MAE works by masking an input image at a known scale, with the scale being determined by the area of the Earth covered by the image, rather than the image resolution. The masked image is then encoded using a standard Vision Transformer (ViT) backbone. During the decoding process, the masked image is passed through a bandpass filter to reconstruct low/high-frequency images at lower/higher scales.

The authors evaluate the quality of representations from Scale-MAE by freezing the encoder and performing a nonparametric k-nearest-neighbor (kNN) classification with eight different remote sensing imagery land-use classification datasets with different GSDs, none of which were encountered during pretraining. Scale-MAE demonstrates considerable improvements compared to current state-of-the-art approaches, and achieves an average of 2.4−5.6% non-parametric kNN classification improvement. Furthermore, the method also obtains a 0.9 mIoU to 1.7 mIoU improvement on the SpaceNet building segmentation transfer task across various evaluation scales.

This novel approach to pretraining for remote sensing applications has the potential to significantly enhance multiscale analysis, enabling more accurate and efficient processing of remote sensing imagery.

Authors: Colorado J. Reed, Ritwik Gupta, Shufan Li, Sarah Brockman, Christopher Funk, Brian Clipp, Kurt Keutzer, Salvatore Candido, Matt Uyttendaele, Trevor Darrell

labs-gpt-stac

Numerous imagery archives employ a STAC API for data access. However, executing STAC queries necessitates programming skills, restricting the accessibility of these assets to individuals with technical expertise. labs-gpt-stac is a novel codebase that showcases an innovative approach, utilizing ChatGPT to generate STAC queries. This breakthrough eliminates the need for programming knowledge, significantly simplifying access to and usage of geospatial data for a wider audience.

💻 Code

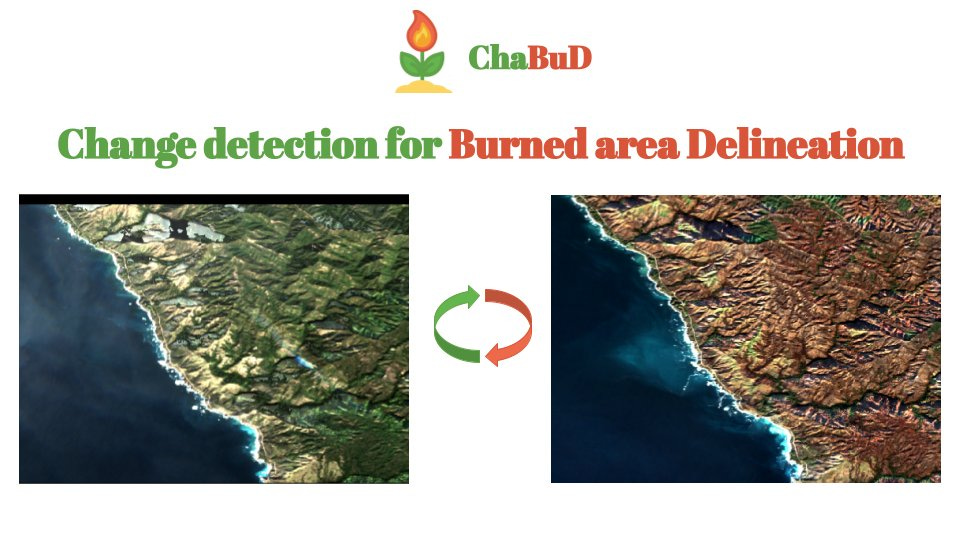

ChaBuD challenge

The ChaBuD Challenge introduces a binary image segmentation task focused on forest fires in California, utilising Sentinel-2 L2A satellite imagery. The objective is to predict whether a specific region has been affected by a forest fire, based on satellite images acquired before and after the fire event. By incorporating temporal information, participants are encouraged to develop robust change detection models that effectively identify areas impacted by wildfires. Through the successful development and implementation of these change detection models, researchers and decision-makers can better understand wildfire patterns and allocate resources for environmental restoration and future prevention.

🗓️ June 10, 2023: end of the challenge

🥇 1st place winner: one full registration to the ECML/PKDD 2023 Conference

BioMassters winners announced

Shared in the 3rd Newsletter, the goal of the BioMassters challenge was to estimate the yearly biomass of 2,560 meter patches of land in Finland's forests using Sentinel-1 & 2 imagery. Over the course of the competition participants tested over 1,000 solutions, and the winners achieved a substantial improvement in performance over the benchmark model.

To build high-performing models, entrants experimented with a range of model architectures, data augmentation strategies and feature engineering approaches. Participants were given monthly images each from S-1 and S-2 to estimate yearly biomass, and interestingly the first and third place winner chose to aggregate these monthly images rather than using a time-series approach. The second place winners used a time-series approach by treating time as a third dimension and passing the 3-D features they created into a 3-D image segmentation model. All three finalists used some variation of a UNet architecture, which is among the most popular for computer vision tasks.

MARIDA: Marine Debris dataset on Sentinel-2 satellite images

MARIne Debris Archive (MARIDA) is a marine debris-oriented dataset on Sentinel-2 satellite images. It also includes various sea features that co-exist. MARIDA is primarily focused on the weakly supervised pixel-level semantic segmentation task.

Video: Change detection with TorchGeo by Caleb Robinson

Excellent video from the IEEE GRSS First IADF School on Computer Vision for Earth Observation

Poll

In the previous poll I asked if people in the remote sensing community use AI assistants like Github Copilot to assist in their programming. Just under half the respondents (37%) do use these tools regularly, whilst the majority have only experimented with them (41%) or have never used them (22%). I also use ChatGPT as an assistant when I read papers, to either answer questions I have that are raised by the paper, or to rephrase & break down new concepts in the paper. In this poll I want to find out how and if ChatGPT (and variants) are being used in the context of reading papers